AI and the opportunity for decentralised rails

Why the most impactful technology the world has seen to date should be built on decentralised rails

Thanks to Gabor Soter and Naman Gupta, who helped to shore up my (still rudimentary) knowledge of AI and bounce ideas around on this piece. I must note explicitly that this piece does not necessarily reflect their views, but their thoughts were incredibly valued.

In “A changing world order”, Ray Dalio shows that:

Throughout time and in all countries, the people who have the wealth are the people who own the means of wealth production.

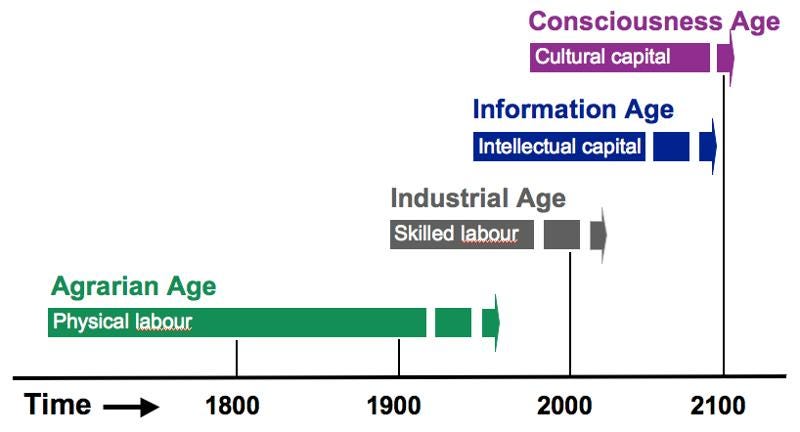

500 years ago, that wealth accrued to the kings and landowners, who owned the assets needed for agriculture - the core means by which humans sustained themselves in that era.

During the industrial revolution, as more utility could be gained by creating new products from existing resources, wealth accrued both to those who owned the machinery that made this possible and to those who owned the resources that powered the factories (coal and oil fields).

As we entered the internet era, wealth accrued to those who owned the platforms on which people spent their time. The difference in this case is that, whereas geographical restrictions prevented the global monopolisation of industries in prior shifts of this kind, the internet broke those barriers. This allowed small groups both to reap a substantial proportion of the rewards for the products they created and to exercise a lot of control over the platforms on which people spend so much of their time.

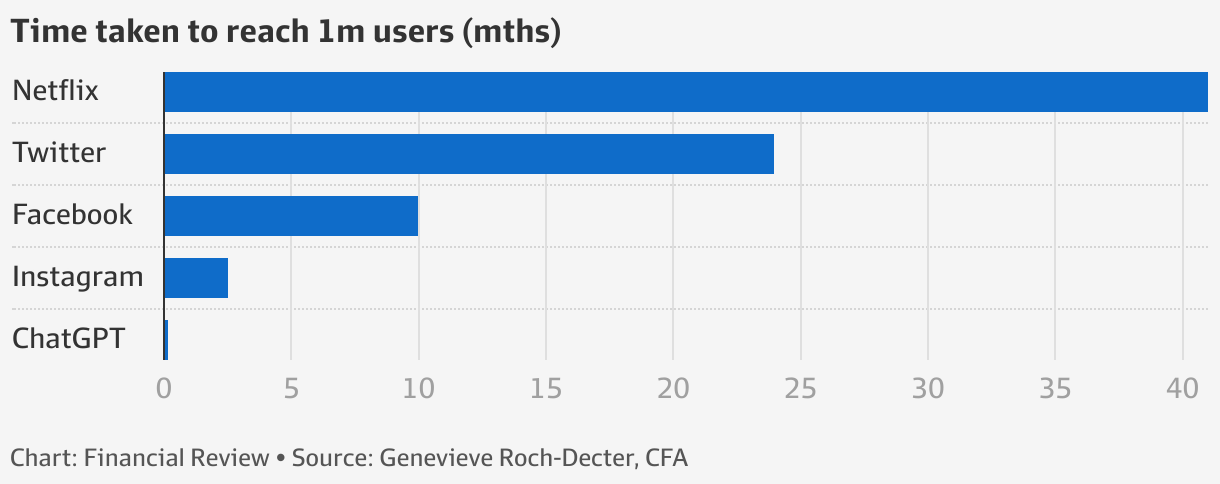

There are few clearer examples of this than the groundbreaking progress in AI over the past few years, and in particular the past few months: GPT-3 came out in June 2020, DALL·E 2 opened to everyone without a waitlist in September 2022, allowing users to produce unique, high-quality images in seconds from simple prompts, and ChatGPT was released in November 2022, reaching over 1m users within 5 days.

*Stability AI’s and Midjourney’s models have been equally impressive.

The combination of the power of the technology and the unprecedented pace of its development means that it will be more disruptive to human livelihoods than any technology before it. Where other technological changes have led to a change in the type of work that humans have needed to do, AI has the ability to truly replace the need for human labour altogether - and the point at which this change occurs appears to be getting nearer and nearer.

If AI reduces the need for human labour, then in its current state there is likely to be a substantial increase in inequality and an acceleration in the need for things like universal basic income. However, given the way in which AI leverages the prior and current work of humans, there should also be a more equitable way to reward those who contribute to the outputs achieved.

Here I lay out a short case for the benefits of leveraging decentralised technology in the development of AI models.

Case Study: How Generative AI Works

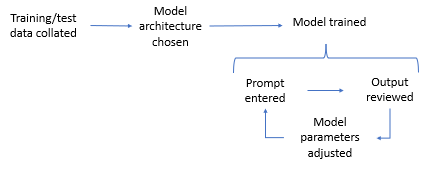

Above is a really simple graphic of the core parts of an AI model. For a slightly more in-depth view, see this post on how AI models work. At each of these stages, current or prior human work is leveraged in order to create output:

Training / test data collated:

The data / images used to train the model were likely generated by humans somewhere at some point - note, some cases of synthetic data may have reduced human contact.

Model architecture:

The model would have been built by a team. For example, text-davinci-003 is OpenAI’s most capable GPT-3 model.

Prompt entered:

Humans create prompts for the model to be trained on, or they create the system to automate prompt generation.

Output reviewed:

Humans review the outputs of the model and assess them for accuracy, precision and recall. This could be as simple as the model requesting feedback after the output is created.

Model parameters adjusted:

Parameter adjustments are made to the model, likely by the team who created the initial model.
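To make the review step a little more concrete, here is a minimal sketch of how accuracy, precision and recall could be computed from a batch of reviewed outputs. The function name `review_metrics` and the sample labels are made up for illustration, and it assumes each output has been reduced to a simple yes/no judgement, which real review pipelines may not do.

```python
def review_metrics(predicted, actual):
    """Return (accuracy, precision, recall) for binary labels, True = positive."""
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p and a)          # true positives
    fp = sum(1 for p, a in pairs if p and not a)      # false positives
    fn = sum(1 for p, a in pairs if not p and a)      # false negatives
    tn = sum(1 for p, a in pairs if not p and not a)  # true negatives
    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return accuracy, precision, recall


# Hypothetical example: what the model said vs. what human reviewers decided.
predicted = [True, True, False, True, False]
actual = [True, False, False, True, True]
accuracy, precision, recall = review_metrics(predicted, actual)
print(accuracy, precision, recall)
```

In this toy batch the model is right on 3 of 5 outputs, 2 of its 3 positive calls are correct, and it finds 2 of the 3 true positives.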

Currently, the steps taken to identify and track the contributors to relevant outputs are either done in a centralised way or not at all. Whereas Stability AI have open-sourced their dataset, OpenAI’s remains closed.

In an interview, Emad Mostaque refers to how Apple have mechanisms for verified content creation. The interview also covers how Stability AI are partnering with Adobe on content authenticity - a small metadata file, carrying the content’s hash, that attaches to every piece of content created. This allows for the detection of any edits made to the content.
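As a rough illustration of the idea (not the actual Adobe or Stability AI format, which I have not reproduced here), the sketch below attaches a tiny metadata record containing a SHA-256 hash to a piece of content and uses it to detect edits. The names `make_manifest` and `is_unedited`, and the `creator` field, are assumptions for the example. A real system would also need to sign the record so that the metadata itself cannot be swapped out.

```python
import hashlib
import json


def make_manifest(content: bytes, creator: str) -> dict:
    """Build a small metadata record holding the content's hash (hypothetical format)."""
    return {"creator": creator, "sha256": hashlib.sha256(content).hexdigest()}


def is_unedited(content: bytes, manifest: dict) -> bool:
    """True if the content still hashes to the value stored in the manifest."""
    return hashlib.sha256(content).hexdigest() == manifest["sha256"]


original = b"bytes of a generated image"
manifest = make_manifest(original, creator="alice")
print(json.dumps(manifest))

edited = original + b" with one extra pixel"
print(is_unedited(original, manifest))  # content untouched
print(is_unedited(edited, manifest))    # content changed after the manifest was made
```

Any change to the content, however small, produces a different hash, which is what makes the edit detectable.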

This same technology can be used to label the creators of the content that is making up the training data, and to identify the individuals who review the outputs during the test phases that lead to adjusting parameters and a better result. Moreover, outputs can be attributed to all of the contributors who helped to produce them, from training data providers to prompt writers.
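One way to picture this attribution is as a record per output that lists who contributed and in what role. The sketch below is purely illustrative: `Contribution`, `OutputRecord` and the role names are invented for this example and do not describe any existing system.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Contribution:
    contributor: str
    role: str  # hypothetical roles: "training_data", "prompt", "review"


@dataclass
class OutputRecord:
    output_id: str
    contributions: list = field(default_factory=list)

    def add(self, contributor: str, role: str) -> None:
        """Attach a contributor and their role to this output."""
        self.contributions.append(Contribution(contributor, role))

    def contributors_by_role(self) -> dict:
        """Group contributor names by the role they played."""
        by_role = {}
        for c in self.contributions:
            by_role.setdefault(c.role, []).append(c.contributor)
        return by_role


record = OutputRecord(output_id="image-001")
record.add("alice", "training_data")
record.add("bob", "training_data")
record.add("carol", "prompt")
record.add("dave", "review")
print(record.contributors_by_role())
```

The hard part in practice is deciding which training data actually influenced a given output; this sketch assumes that mapping is already known.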

Why Blockchain

Leveraging blockchain in this case has three core benefits:

Rails: Ease of reward distribution/attribution

Trust: at scale

Growth: Composability/Interoperability

Rails: Ease of reward distribution

Given the transparency and sovereignty of asset ownership, transactions on blockchain remain one of the most efficient mechanisms for distributing rewards. Where PayPal has substantial fees that it could change on a whim, and the ability to freeze or seize assets on a whim, on-chain payments do (*should) not. Where bank transfers are often limited by working hours and subject to heavy fees, blockchain transfers are available as long as there is a suitable party willing to validate the transaction at the relevant cost.

There are concerns with using blockchain compared to a centrally run alternative: poorer UX, less efficient infrastructure and greater difficulty in rectifying issues. Longer term, though, we anticipate substantial progress in infrastructure efficiency and UX, which should in turn lead to fewer issues that need rectifying.
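To show what reward distribution could look like at its simplest, here is a sketch that splits a reward pro rata across contributors. It does not touch any blockchain; `split_reward`, the contributor names and the weights are all assumptions for illustration. It works in whole units (as token amounts usually are) so that nothing is lost to rounding.

```python
def split_reward(total_units: int, weights: dict) -> dict:
    """Split total_units across contributors in proportion to integer weights."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Floor each share first, then hand out the leftover units one at a time.
    shares = {name: total_units * w // total_weight for name, w in weights.items()}
    remainder = total_units - sum(shares.values())
    # Leftovers go to the largest weights first, ties broken by name.
    for name in sorted(weights, key=lambda n: (-weights[n], n))[:remainder]:
        shares[name] += 1
    return shares


# Hypothetical weights for one output's contributors.
weights = {"data_provider": 5, "prompt_writer": 3, "reviewer": 2}
payout = split_reward(100, weights)
print(payout)
```

How the weights themselves are set is the real design question, and it is not answered here.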

Trust: at scale

As adoption of AI grows exponentially, and the size of the inputs also grows, those who own parts of those means of production may be incentivised to train the models to encourage certain behaviour to their own benefit: this could range from pushing certain products all the way through to spreading misinformation to manipulate election results.

Putting this data on chain will allow for more trust at scale, as it will allow individuals to test systems and identify exactly where problems may be occurring. For example, if there is clear bias in an AI model, not only can the training data that caused the bias be identified, but new data can also be added to counter it.
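The trust property comes from records being append-only and tamper-evident. The sketch below is a toy hash-chained log, not a real blockchain: there is no consensus, networking or signing, and the function names and payload fields are made up for illustration. It only shows why quietly rewriting an earlier record of training data becomes detectable.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash an entry together with the hash of the entry before it."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append(log: list, payload: dict) -> None:
    """Add a new entry that commits to everything recorded before it."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev, "payload": payload, "hash": entry_hash(prev, payload)})


def verify(log: list) -> bool:
    """Recompute every hash and check that the links between entries hold."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True


log = []
append(log, {"dataset": "images-v1", "contributor": "alice"})
append(log, {"dataset": "images-v2", "contributor": "bob"})
print(verify(log))

log[0]["payload"]["contributor"] = "mallory"  # rewrite history
print(verify(log))
```

Because each entry includes the hash of the previous one, changing an old record breaks verification for the whole log.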

Growth: Composability / Interoperability

One of the other core benefits of using blockchain to map the components of an AI output is the ability to leverage components of the model more effectively and build on top of existing infrastructure. Leveraging certain training data with a different kind of model or specific scenario in a trusted way will allow for the space to grow faster. It also has huge benefits for finetuning of models.

Finetuning

As the core models improve their precision for broader use cases, over time users go through a process of finetuning - where individuals can add to the model using their own training data set.

This can be really good for application-specific usage of AI, or to create a more personalised model for specific individuals or groups of people based on their geography, dialect or even profession - anything that changes language use enough that a specific data set would improve the accuracy of results.

Final Thoughts

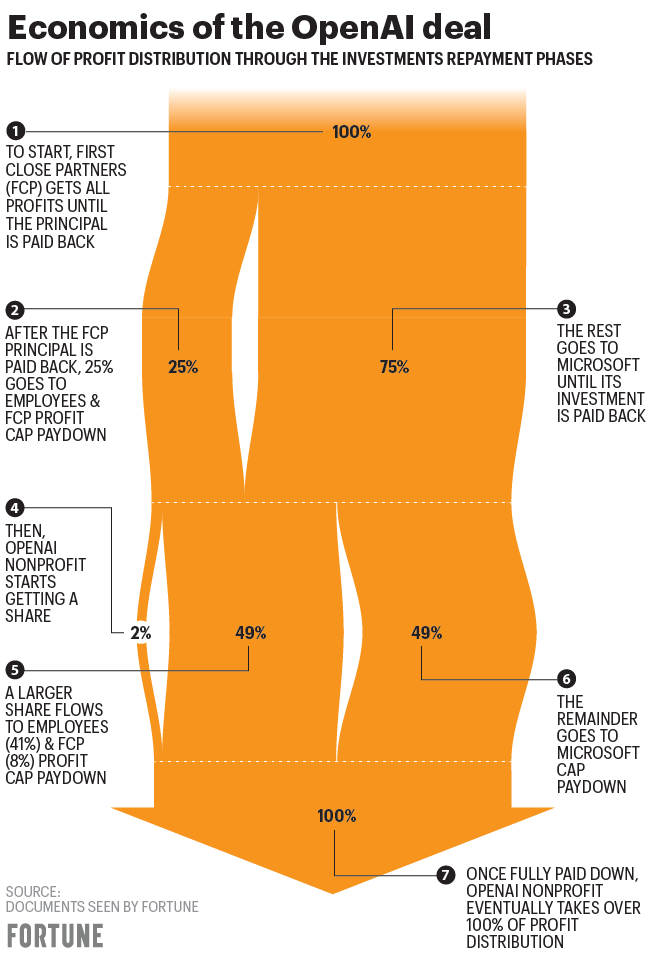

Does the non-profit nature of OpenAI change the need for this?

Can the same trust be built through well structured centralised systems?

Note: These are just random thoughts I have whilst daydreaming… don’t take them too seriously. Please subscribe, share with a friend or two, and let me know what sort of content you’d like to see next.

Cool references:

Alliance DAO request for AI x Crypto related projects

https://avc.com/2022/12/sign-everything/

Interesting read. AI inequality is concerning, and I'm curious to see how that unfolds.

Do the economics behind these ideas rely on AI systems having highly accurate content attribution capabilities?

Curious about how challenging highly accurate content attribution is in practice, as I'm sure it adds extra constraints.